An Investigation Into England Euro 2020 Social Media Abuse Using Open Source Intelligence

Following the targeted racist attacks on members of the England football team after the final of Euro 2020, Neotas have conducted an investigation into the online abuse.

After Twitter had publicly shared the results of their “proactive” action taken following the final, we focused on Twitter and analysed whether their clean-up effort had been sufficient in identifying and punishing the harmful behaviour.

A Momentous Occasion Marred

The 2020 UEFA European Football Championship, commonly referred to as EURO 2020, was held from 11th June 2021 to 11th July 2021 across various locations in Europe.

The final was played on 11th July 2021 at Wembley Stadium, London, between England and Italy. Italy won the tournament, beating England 3-2 in a penalty shoot-out following a 1-1 draw after extra time.

For England, five players took penalties; Harry Kane and Harry Maguire scored. However, Marcus Rashford, Jadon Sancho and Bukayo Saka missed their spot kicks, resulting in Italy winning the championship.

In a game that was marred by multiple incidents of fan violence and unruliness in the build-up to and during the match, the tone was lowered further when racial abuse was aimed at the three players who missed their penalties.

Such was the escalation of the story that members of the Royal Family, UK Prime Minister Boris Johnson and government agencies weighed in to condemn the attacks, demanding that action be taken to punish the offenders.

The Metropolitan Police opened an investigation into the offensive and racist social media posts, which has since seen 11 people charged. The social media platforms themselves continue to claim to have responded in the strongest way possible, with Twitter removing more than 1,000 tweets initially and permanently suspending multiple accounts.

Twitter has since claimed to have removed approximately 1,600 Tweets, accounting for around 90% of abuse. Our investigation immediately discovered an additional 70 Tweets that were abusive, racist or threatening in some way.

Platform Responses

Previous meetings with the major football associations of England had resulted in Facebook essentially leaving the onus on the players and clubs to protect themselves, rather than the platform proactively protecting its users. The platform has since made changes, but players and associations have so far deemed them insufficient.

While Twitter is just one of a number of major platforms to have been used to facilitate the abuse, it has attracted much of the attention so far and has shared the most robust and open responses to date. Twitter published this update on 10th August, following continued public discourse about the online abuse:

“Following the appalling abuse targeting members of the England team on the night of the Final, our automated tools, which had been in place throughout Euro 2020, kicked in immediately to identify and remove 1622 Tweets during the Final and in the 24 hours that followed.

While our automated tools are now able to detect a majority of the abusive Tweets we remove, we also continue to take action from reports. New vectors of abuse are ever-emerging, which means our system is having to adapt on an ongoing basis. Therefore, to supplement our efforts, trusted partners are able to report any further Tweets directly to our front-line enforcement teams. In total, over 90% of the Tweets we removed for abuse over this period were detected proactively.”

Twitter’s own analysis into the violating accounts is ongoing, but their initial findings concluded:

- The UK was – by far – the largest country of origin for the abusive Tweets removed on the night of the Final and in the days that followed

- That ID verification would have been unlikely to prevent the abuse from happening – as the accounts we suspended themselves were not anonymous

- Only 2% of the Tweets we removed following the Final generated more than 1000 Impressions

The update from Twitter has helped continue the conversation relating to the abuse faced by the England players at Euro 2020, as well as the more general issue of online abuse.

Twitter have announced the trial rollout of the following actions to help curb the racist interactions on their platform:

- A new feature that temporarily autoblocks accounts using harmful language

- Reply prompts, which encourage users to revise their replies to Tweets when it looks like the language they use could be harmful

What isn’t clear is whether these tools evaluate visual media such as images, videos and emojis, which appeared consistently throughout our limited searches.

Our Insights – Focus On Twitter

Our data analysts are experts in identifying behavioural risks hidden in online data. This can, and often does, include aggressive, discriminatory and abusive behaviour linked to social media profiles. The activity can be active (posted or shared by the subject in question) or passive (linked to, shared or associated with the subject in question).

The data we search is called open source intelligence (OSINT) and is 100% publicly available; however, only experts like Neotas have the skillset to interrogate it fully and provide adequate context.

Following Twitter’s announcement that they had removed 90% of abuse within a few days of the final, we conducted a limited search and uncovered more than 40 profiles still active on Twitter. Those accounts had shared approximately 70 tweets containing discriminatory abuse directed towards the England team. This was after Twitter's initial response and after the police had opened their investigation.

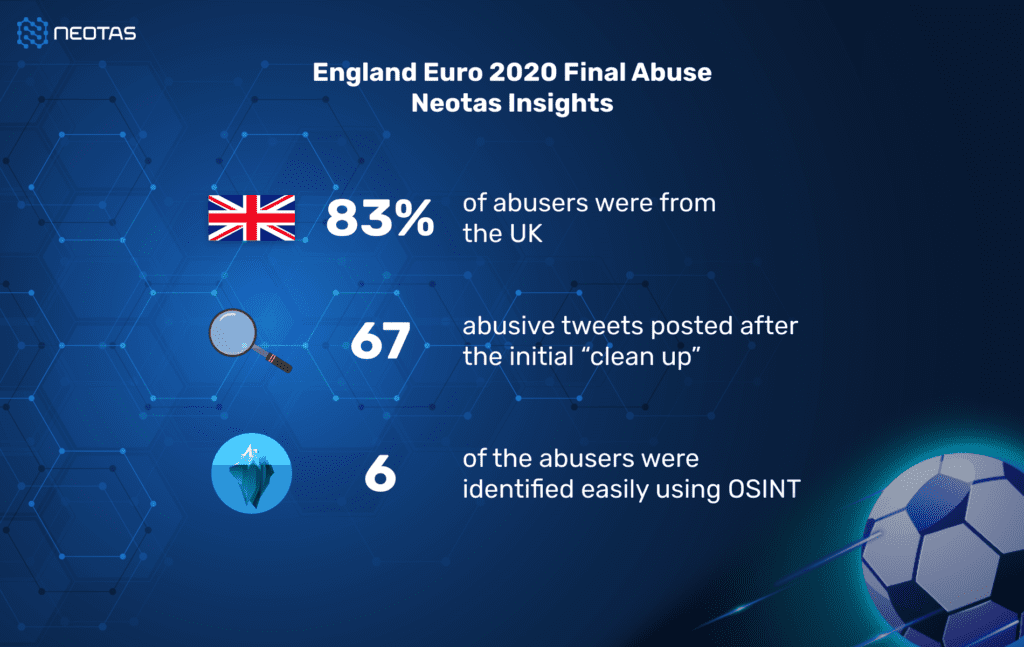

Of the abusive accounts uncovered in our findings, 83% were based in the UK, a figure consistent with Twitter’s statement that the UK was by far the largest country of origin for the abusive Tweets it removed.

More than 90% of the abusive tweets we discovered were sent after the initial “clean up” from Twitter. While the recent update indicates that the platform is continuing to investigate, our findings show that 95% of the accounts we discovered remain active, with the vast majority still displaying the offensive content.

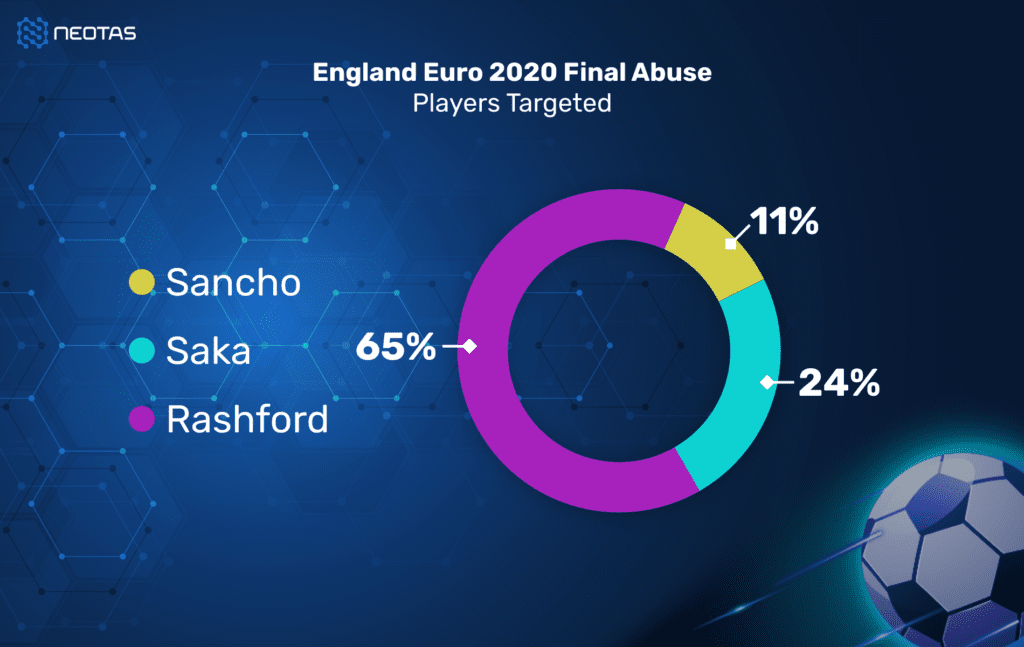

While there was a lot of general criticism and aggression directed towards the England team following their crushing defeat, here is a breakdown of the abuse faced by the three players who missed their penalties:

Racism was the most common theme amongst the responses found, with a large number of accounts including visual content such as images, videos and emojis to emphasise their aggression. Marcus Rashford, who was the overwhelming target of the responses we found, also faced repeated attacks over his charitable work and philanthropy.

In line with Twitter’s announcement, a large number of the assailants were easily discoverable. Using OSINT, we were able to fully identify at least six real people behind the attacks, including their real names, contact details, addresses and places of work. With further investigation, we are confident that we would be able to discover more.

The results uncovered as part of our research represent just a small section of the total online activity following the final and are just the tip of the iceberg when it comes to our data interrogation capabilities. While for this exercise we focused primarily on Twitter, there are undoubtedly similar cases across other platforms, including Facebook and Instagram. OSINT can easily be harnessed to interrogate all online activity, including social media channels.


Repeatable Cycle – What Action To Take

The attacks and vitriol directed at the England football team at Euro 2020 were just the latest in a seemingly never-ending cycle faced by sports stars and by the wider online community.

Debates continue as to whether requiring ID to set up social media accounts is the way to tackle this endemic problem, but what’s clear is that many of these users can already be traced. Our Director Ian Howard wrote previously of the need to embrace technology to fight the issue, arguing for the use of open source intelligence (OSINT) to help detect, verify and punish those caught being abusive on the platforms.

It is important to note that almost all of the accounts identified by Neotas and by Twitter were traceable, while many are still active and just 11 have been charged to date.

In real terms this means that many of those who sent out vile, abusive messages following the final returned to work the following day without employers knowing about their true character.

Businesses have a duty to protect their employees from risk and should use all available methods to help monitor and safeguard their staff’s wellbeing.

The use of employment screening tools like social media screening can help identify high-risk behaviours and will lower the risks of abusive behaviour within the workplace. These tools can and should be used to screen prospective hires, particularly those in senior roles, as well as current employees.


To find out more about social media screening or to discuss our findings, please schedule a call with our team here.
